AI in local councils: from resistance to change to 90-day pilot programs

Published October 2, 2025 · AI and Digitalization · Institutions

What is hindering the adoption of AI in local administrations, what changes with the AI Act, and how to move from doubts to measurable results with controlled 90-day pilot projects.

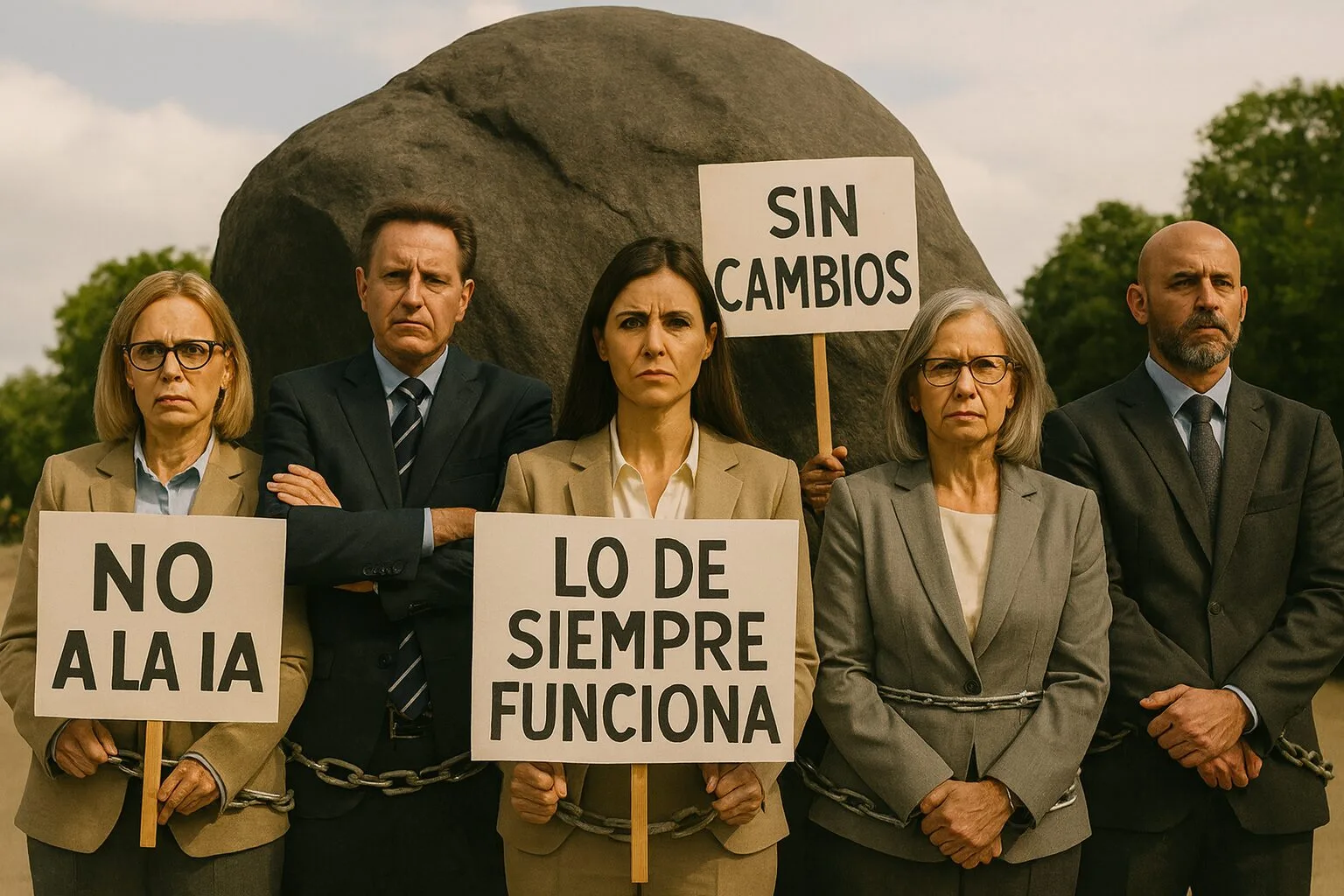

1. Why is AI adoption in local councils almost nonexistent today?

Although artificial intelligence has become a recurring theme in conferences, headlines, and political agendas, very few Spanish municipalities have actually moved from words to practice. Most remain passive observers, with no concrete projects or defined roadmap.

The main obstacles are:

- Budgetary constraints: investment in digitalization stays at the minimum required by regulations or subsidies. AI is perceived as a luxury, not an operational resource.

- Lack of in-house technical profiles: without staff who know the subject, dependence on external suppliers becomes a barrier to getting started.

- Cultural resistance: there is a persistent fear that AI will replace jobs or complicate bureaucracy instead of simplifying it.

- Competing priorities: mobility, housing, cleaning, and security dominate day-to-day work and push innovation aside.

- Lack of regulatory knowledge: until the European AI Act was approved, the legal framework was vague and encouraged inaction.

Three common myths, and the reality behind them:

- “AI will take away public sector jobs.” → Well-designed automation shifts time from repetitive tasks to higher-value work.

- “We don’t have enough data.” → Many pilots can start with data the council already holds (files, incidents, citizen services), with minimal structuring.

- “This only works for big cities.” → Limited pilots work especially well in small teams, because they need less coordination.

A further brake is the model used by large consulting firms and some IT providers: three- to five-year plans, extensive documentation, and complex terminology. In theory it sounds solid; in practice it rarely gets beyond PowerPoint. Lack of resources, cultural resistance, and day-to-day pressure are blocking real deployment.

The alternative: focused projects, short deadlines, and measurable results that build trust before scaling up.

It's not about waiting for a “grand plan”, but about demonstrating within weeks that AI can free up time and handle repetitive tasks safely. That is the bridge between discourse and operational reality.

2. What changes with the AI Act and European regulation

The AI Act is now law in the EU: it was published in the Official Journal in July 2024 and entered into force on August 1, 2024. Its obligations apply in phases (prohibitions first, transparency and risk-based requirements later). For a clear, official overview, see the European Parliament's summary.

- Clear prohibitions (e.g., social scoring of citizens or subliminal manipulation): these must be ruled out from the start when designing pilots or purchases.

- Risk-based approach: if a use case is high-risk (decisions that affect people's rights), it requires risk management, data quality, record-keeping, human oversight, and a prior assessment.

- Transparency for limited-risk AI (chatbots, content generation): tell people they are interacting with an AI and disclose when content has been synthetically generated.

- General-purpose AI models (GPAI): documentation obligations and EU-level codes of practice, which should be required of suppliers.

| Before the AI Act | With the AI Act (phased application) | Practical implications for local councils |
|---|---|---|
| Blurry framework; doubts about what is allowed with AI. | Prohibitions defined by law and an implementation schedule. | A checklist of exclusions at the start of any RFI/RFP. |
| “One size fits all” in technology purchases. | Risk-based approach (high, limited, minimal) with escalating obligations. | Classify each case and, where applicable, require evidence of human control and oversight. |
| Optional transparency in chatbots and synthetic content. | Mandatory transparency for limited-risk AI. | Label AI interactions and flag generated content. |
| Little clarity on general-purpose AI models (GPAI). | Documentation obligations and specific codes of practice. | Require GPAI documentation and adherence to codes from suppliers. |
| Limited guidance on unacceptable practices. | Guidelines and FAQs from European institutions. | Train technical and secretariat staff on what is not allowed before evaluating pilots. |

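Key milestones:

- 2024: entry into force (August 1) and start of the implementation calendar.

- 2025: first obligations apply (prohibitions from February, obligations for general-purpose AI models from August) and guidance is rolled out.

- 2026: most of the framework becomes applicable (from August 2026).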

In practice, this isn't about "stopping" AI projects but about designing them well: limited use cases, risk classification from the outset, transparency towards the public, and evidence of human oversight. On this foundation, 90-day pilots can be run with legal certainty and a focus on results.

3. Practical cases where AI adds value with low risk

Implementing AI in a city council doesn't require massive or futuristic projects. There are already simple, safe cases with immediate impact that can be deployed in weeks if they are well scoped.

Citizen services with supervised AI

A chatbot restricted to the council's official information and always supervised by municipal staff resolves repetitive queries (opening hours, procedures, phone numbers), freeing up time at customer service desks.
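To make the idea of a supervised chatbot more concrete, here is a minimal sketch under stated assumptions: the FAQ entries are hypothetical placeholders for the council's official content, and simple word overlap stands in for a real retrieval or language model. What it shows is the control logic the paragraph describes: answers come only from the official source, every reply discloses that it is automated (the transparency duty for limited-risk AI), and anything without a confident match is handed to a member of staff.

```python
import re
from dataclasses import dataclass

# Hypothetical placeholders: in a real pilot these would be the council's
# validated official answers, maintained by municipal staff.
OFFICIAL_FAQ = {
    "opening hours town hall office": "[Official opening hours text]",
    "register residence census padron": "[Official registration procedure text]",
}

# Transparency notice shown with every automated reply.
AI_NOTICE = "You are talking to an automated assistant."

@dataclass
class Reply:
    text: str
    needs_human: bool  # True = route the question to a member of staff

def tokenize(text: str) -> set[str]:
    """Lowercase word set; keeps Spanish accented letters."""
    return set(re.findall(r"[a-záéíóúñü]+", text.lower()))

def answer(question: str, min_overlap: int = 2) -> Reply:
    """Answer only from OFFICIAL_FAQ; escalate when no entry matches well enough."""
    words = tokenize(question)
    best_key, best_score = None, 0
    for key in OFFICIAL_FAQ:
        score = len(words & tokenize(key))
        if score > best_score:
            best_key, best_score = key, score
    if best_key is None or best_score < min_overlap:
        return Reply(f"{AI_NOTICE} I can't answer this from official information; "
                     "a member of staff will follow up.", needs_human=True)
    return Reply(f"{AI_NOTICE} {OFFICIAL_FAQ[best_key]}", needs_human=False)
```

The `min_overlap` threshold is the knob that trades coverage for caution; in a pilot, staff would review the escalated questions to decide which official answers to add.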

Intelligent search in files and regulations

AI models such as semantic search engines allow technicians and councilors to locate relevant articles, draft documents, and detect regulatory inconsistencies in minutes instead of hours.

Urban maintenance prediction

Analyzing incident patterns (lighting, trees, pavements) lets the council anticipate repairs before they become major problems, moving from a reactive to a preventive approach.
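A simple baseline for this kind of preventive maintenance is to count recent incidents per location and category and flag recurring hotspots. The sketch below assumes a hypothetical record format `(date, location_id, category)` and illustrative values for the time window and threshold; it is a frequency heuristic, not a trained predictive model, but it may be enough to test during a pilot whether the idea pays off.

```python
from collections import Counter
from datetime import date, timedelta

# Hypothetical record format: (date reported, location id, category).
Incident = tuple[date, str, str]

def hotspots(incidents: list[Incident], today: date,
             window_days: int = 90, threshold: int = 3) -> list[tuple[tuple[str, str], int]]:
    """Return (location, category) pairs with at least `threshold` incidents
    in the last `window_days`, most frequent first."""
    start = today - timedelta(days=window_days)
    counts = Counter(
        (location, category)
        for day, location, category in incidents
        if start <= day <= today
    )
    flagged = [(key, n) for key, n in counts.items() if n >= threshold]
    return sorted(flagged, key=lambda item: item[1], reverse=True)
```

Flagged pairs (for example, three lighting faults on the same street within 90 days) become candidates for a preventive inspection, which a technician still decides on.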

Automatic incident classification

Citizen reports submitted via the web or app are automatically categorized and assigned to the appropriate brigade (cleaning, mobility, lighting), reducing response times and improving traceability.
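As an illustration of automatic routing with a human fallback, the sketch below uses hypothetical keyword rules in place of a trained classifier. The brigade names come from the text; the keywords, and the `human_review` queue for reports with no match or a tie, are assumptions.

```python
import re

# Hypothetical keyword rules per brigade; in a pilot, staff would tune these
# (or replace them with a trained classifier) using real historical reports.
ROUTING_RULES = {
    "lighting": {"streetlight", "lamp", "bulb", "dark"},
    "cleaning": {"rubbish", "garbage", "litter", "graffiti", "dirty"},
    "mobility": {"traffic", "signal", "parking", "bus", "crossing"},
}

def route(report: str) -> str:
    """Return the brigade for a citizen report, or 'human_review' if unclear."""
    words = set(re.findall(r"[a-z]+", report.lower()))
    scores = {brigade: len(words & keywords) for brigade, keywords in ROUTING_RULES.items()}
    best = max(scores, key=scores.get)
    # No keyword matched, or two brigades tie: a person decides.
    if scores[best] == 0 or list(scores.values()).count(scores[best]) > 1:
        return "human_review"
    return best
```

Logging which reports end up in `human_review` also gives the pilot a ready-made list of cases for improving the rules, or for labeling if the council later trains a proper classifier.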

Municipal AI doesn't have to start with massive projects: the most useful pilots solve repetitive tasks, free up time, and are easily understood by citizens.

4. How to overcome cultural and technical barriers

While the AI Act has cleared up many doubts on the regulatory front, internally local councils still face very specific barriers to adopting AI. These are less about technology than about people, culture, and organization.

- Cultural resistance in teams: fear of job replacement and the perception that AI “complicates things” instead of simplifying them.

- Technical skills gap: few staff trained in data, automation, or AI; excessive dependence on external providers.

- Disordered processes: fragmented documentation, non-integrated systems, and no reliable digital inventories.

- Political fear: worry that an AI pilot will draw media criticism (“the council spends money on robots while basic services are lacking”).

- Organizational inertia: with packed daily schedules, it's hard for anyone to find time to try something new.

What helps to overcome them:

- Internal transparency: explain from the start what the AI does and does not do.

- Light, applied training: not generic courses, but short sessions on the use case being piloted.

- Start small: a limited pilot reduces resistance and builds confidence.

- Explicit human oversight: stress that the final decision is always made by a person.

- Early measurement: show clear indicators of time saved or incidents resolved within a few weeks.

AI doesn't replace civil servants: it gives them back time for what's important.

These are the keys that have allowed us to transform resistance into collaboration. Only with small, measurable steps can we build the trust necessary to take the leap to more ambitious projects.

Cultural change in a city council is achieved through small, visible successes, not through long speeches.

5. From theory to practice: the 90-day pilots

After years of strategic plans that never made it past the drawing board, the most reliable way forward is to test early and measure. A 90-day pilot demonstrates value without large budgets or long-term commitments, with defensible results within a few weeks.

Why 90 days:

- Manageable timeframe: no one perceives it as an “eternal project”.

- Clear metrics: time saved, issues resolved, satisfaction.

- Political fit: three months deliver results within the same fiscal year.

Basic structure of a 90-day pilot

Use case selection

We choose a specific, repetitive, and visible problem (e.g., incident classification or citizen services).

Minimum data preparation

We organize the essential information (files, incident logs, official documentation) and define access.

Supervised implementation

We implement the AI tool in a limited environment, always with explicit human supervision.

Early measurement

We track simple indicators from the first month: average response time, % of correct classifications, volume of queries handled.

Evaluation and decision

With the data on the table, we decide: scale up, adjust, or rule out. No dogma, just results.
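The indicators from the early-measurement step can be computed from a simple case log. This sketch assumes each case records its response time, the category proposed by the AI tool, and the category confirmed by the reviewing staff member (all field names are hypothetical); the optional baseline is the average response time measured before the pilot, used to estimate time saved.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Optional

@dataclass
class Case:
    response_hours: float  # time from receipt to response
    predicted: str         # category proposed by the AI tool
    confirmed: str         # category confirmed by the reviewing staff member

def pilot_metrics(cases: list[Case], baseline_avg_hours: Optional[float] = None) -> dict:
    """Summarize a pilot's case log into the indicators tracked from month one."""
    if not cases:
        raise ValueError("no cases logged yet")
    avg = mean(c.response_hours for c in cases)
    correct = sum(c.predicted == c.confirmed for c in cases)
    metrics = {
        "cases_handled": len(cases),
        "avg_response_hours": avg,
        "pct_correct_classification": 100 * correct / len(cases),
    }
    if baseline_avg_hours is not None:
        # Positive value = hours saved per case compared with the pre-pilot baseline.
        metrics["hours_saved_per_case"] = baseline_avg_hours - avg
    return metrics
```

For example, four logged cases with one misclassification give 75% correct classification; comparing against the pre-pilot baseline turns "time saved" into a figure the scale/adjust/rule-out decision can rest on.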

Keys to success:

- A clear objective from the start.

- A small but committed team (technical lead + political representative + customer-service lead).

- Transparent communication with citizens and staff.

- Unambiguous human oversight at all times.

90-day pilots are not a laboratory: they are the shortcut from PowerPoint to real results.

| Classical approach | 90-day pilot |
|---|---|
| Lengthy diagnosis, extensive documents, diffuse ROI. | Defined problem, clear deliverables, early ROI. |
| Strong dependence on suppliers and committees. | Small team with autonomy and human supervision. |
| 12–24 month horizon. | Visible results in 8–12 weeks. |

6. What should any city council audit before starting?

Before launching a pilot, we run a quick audit to avoid friction and surprises. These simple checks tell us whether we can start now or should first put our house in order on four fronts: data, processes, people, and infrastructure/security.

- Minimum traceable data: we know where the data is (files, incidents, regulations) and who has access.

- “Official” source: there is a repository or document we treat as the reference (avoiding contradictory versions).

- Defined process: there is a description of “how we do it today”, even a brief one; if not, we write it on one page.

- Clear responsibilities: a technical lead and a political lead are designated to make decisions during the pilot.

- Controlled environment: we can run the pilot with test accounts and without touching critical systems.

| Area | Control question | Warning sign | Action in week 1 |
|---|---|---|---|
| Data | Do we have the basic data in one accessible place? | Files scattered across email or personal folders. | Create a shared folder and a list of sources with date and owner. |
| Quality | Do we know which version is the “truth” when there are duplicates? | Two official documents with different figures. | Choose the official source and record the tie-breaking criterion. |
| Process | Is the current workflow (steps, who, when) written down? | Knowledge held orally: “Only So-and-so knows.” | Document the “as is” process on 1 page, including who is responsible. |
| People | Are leads designated (technical + political)? | Nobody with the time or a clear mandate to decide. | Appoint the 2 leads and reserve 30–45 minutes per week. |
| Security | Can we work in a test environment without sensitive data? | Direct access to critical systems is requested. | Create test credentials and an anonymized dataset. |

7. Conclusion and next step

AI in city councils is no longer a futuristic idea; it's a practical tool. Having seen stalled projects firsthand, we know the difference lies not in having a grand plan but in starting small and measuring. That is why 90-day AI pilots are the safest way to move from talk to results.

Administrative modernization is built with short tests, clear metrics, and human oversight, not with five-year promises.

What a 90-day pilot delivers:

- A use case in limited production (citizen services, files, or incidents) with human supervision.

- Operational metrics (time saved, % correct classification, response times).

- A legal and risk checklist aligned with the AI Act, so the project can scale safely.

- An informed decision: scale, adjust, or close, without dogma and based on data.
